Large Language Models (LLMs) are increasingly used for tasks beyond summarizing, translating, or rewriting text: customer support software can analyze sentiment and route support requests, digital assistants can speed up onboarding with Q&A over the team’s knowledgebase, and engineers can use LLMs to quickly understand and work on large codebases.

Foundation models are powerful tools for generalized tasks, but they are limited to the public knowledge supplied in the training stage. This knowledge cutoff is compounded by the limited context window inherent in current state-of-the-art (SotA) LLMs, as any context added at query time must fit within that window.

While 80% of Fortune 500 companies have tried out ChatGPT at least once, we believe that distrust in LLMs caused by hallucinations is a significant barrier to large-scale enterprise adoption down the road. Luckily, hallucinations can be reduced effectively using Retrieval-Augmented Generation (RAG).

With RAG, additional domain-specific context is infused into LLMs as part of a prompt (in-context learning) to aid the LLM in coming up with better completions. To enable RAG, documents are typically transformed into a vector representation (embeddings) ahead of time to quickly find the most relevant data using semantic search.

Frameworks like Haystack, LangChain, and LlamaIndex simplify the process of building LLM applications by offering building blocks for techniques like RAG, but we’ve noticed a surprising absence of production readiness in the broader ecosystem, especially in the data integration stage.

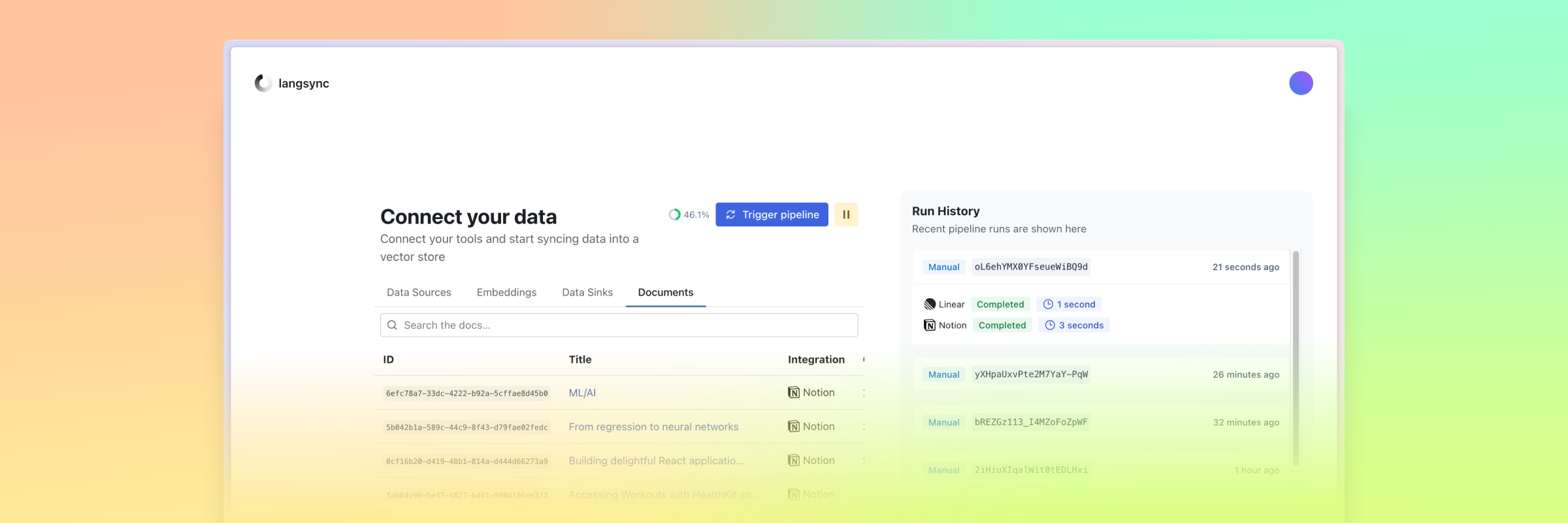

Today, we are excited to announce langsync, a fully open-source product to sync data from your team's tools to your LLM applications in real time so you can focus on creating value for your team and customers. Sign up to langsync for free today and start chatting to your team’s data in under five minutes, guaranteed!

Background

LLMs as reasoning engines

While LLMs were originally designed to improve translation performance, increasing the model size yielded interesting properties that we might carefully describe as an emergent capability to reason. Reasoning and task-solving capabilities have been demonstrated on exams and evaluations, and we expect large improvements in performance over the coming years.

While this area of research is steadily evolving, the need for context is evident when building well-performing applications.

Context is key

The costs for training current SotA LLMs are in the range of tens of millions of dollars in GPU resources, which makes continuously updating models financially and computationally infeasible. Fine-tuning offers relief in some areas but is largely restricted to improving the performance of a model in certain scenarios (e.g. producing SQL from natural language instructions, only outputting JSON, or analyzing sentiment and returning the emotion). Fine-tuning can provide guardrails that make a model’s output more predictable, and a fine-tuned smaller model can run faster than larger models like GPT-4 at a fraction of the cost while keeping similar levels of performance in specific tasks, but it doesn’t solve the problem of limited and outdated context.

RAG is a powerful, yet simple technique to boost completion performance on domain-specific information by providing data as part of the prompt. This way, fresh data can be supplied just in time to generate more accurate answers.
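As a minimal sketch of what "providing data as part of the prompt" can look like, the function below assembles a prompt from already-retrieved chunks. The function name, the prompt wording, and the character budget are illustrative assumptions, not part of any particular framework; a real application would budget in tokens rather than characters.

```python
def build_rag_prompt(question: str, chunks: list[str], max_context_chars: int = 2000) -> str:
    """Assemble a prompt from retrieved chunks (most relevant first) within a size budget."""
    selected: list[str] = []
    used = 0
    for chunk in chunks:
        if used + len(chunk) > max_context_chars:
            break  # stop before the context would exceed the budget
        selected.append(chunk)
        used += len(chunk)
    context = "\n---\n".join(selected)
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


prompt = build_rag_prompt(
    "What is the refund window?",
    ["Refunds are accepted within 30 days.", "x" * 5000],  # second chunk is too large
)
```

The oversized second chunk is dropped, so only the first chunk ends up in the prompt that is sent to the model.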

Knowledge as a vast multi-modal data collection

While transformer-based LLMs primarily work on encoded text (tokens), knowledge exists in far more media formats, including images, videos, PDFs (with images and tables), document scans (invoices, archives), CSVs, Excel sheets, PowerPoint presentations, and more. On top of this multimodal ocean of data, chances are that documents exist in many languages, each of which may use intricate business jargon and include language-specific quirks.

In addition to documents, software tools store increasing amounts of knowledge in an ever more fragmented ecosystem of issue trackers, knowledgebases, CRMs, source code repositories, and HRIS products. Collecting all this context requires a solid infrastructure that can handle large volumes of constantly changing data from various sources.

Data for LLMs comes in infinite shapes

While it’s easy to think about data in a document-centric way, there are many ways to integrate external data into LLM applications. Using feature stores, teams can load data from databases and other sources into a versioned environment from which application developers can then request specific features. Similar to data science workflows, feature stores like Fennel.ai provide a central repository for all data available within an organization.

Alternatively, fetching values from a database and including them in prompt templates encoded as JSON or using another format achieves a similar effect, helping developers to include fresh data in their prompts.
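To illustrate, here is a small sketch that renders a database row into a prompt template as JSON. The record fields and the template wording are made up for this example; the row would normally come from your own database query.

```python
import json
from string import Template

# Hypothetical prompt template; $record and $question are filled in at request time.
PROMPT = Template(
    "You are a support assistant.\n"
    "Customer record (JSON):\n$record\n\n"
    "Question: $question"
)


def render_prompt(record: dict, question: str) -> str:
    # sort_keys keeps the prompt stable across runs; default=str covers dates and decimals.
    encoded = json.dumps(record, sort_keys=True, default=str)
    return PROMPT.substitute(record=encoded, question=question)


print(render_prompt({"plan": "pro", "open_tickets": 2}, "Can I upgrade?"))
```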

With the concept of agents, LLMs are given access to functions to retrieve certain data. This approach has been popularized with ChatGPT Plugins and Function Calling for developers using the API. This way, given the current context, the LLM decides which data it should fetch before coming up with the final response. While requiring multiple round-trips and LLM invocations, passing functions allows for the greatest flexibility at the downside of requiring developers to provide the respective API calls. ChatGPT’s Advanced Data Analysis (formerly known as Code Interpreter) shows the power of an LLM feedback loop to get to the final result.
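The round-trip described above can be sketched as a small loop. Everything here is a simplified assumption: the message and reply shapes are invented for the example and do not match any provider's API, `get_open_tickets` is a hypothetical tool, and `fake_model` stands in for a real LLM call.

```python
import json
from typing import Callable


def get_open_tickets(assignee: str) -> list[str]:
    # Hypothetical data source; a real tool would call an issue tracker API.
    return {"ada": ["T-1: fix login", "T-7: update docs"]}.get(assignee, [])


TOOLS: dict[str, Callable[..., object]] = {"get_open_tickets": get_open_tickets}


def run_agent(model: Callable[[list[dict]], dict], question: str, max_steps: int = 5) -> str:
    """Call the model until it returns a final answer instead of a function call."""
    messages = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        reply = model(messages)
        call = reply.get("call")
        if call is None:
            return reply["content"]  # final answer
        tool = TOOLS.get(call["name"])
        if tool is None:
            # Models can request functions that do not exist; report that back.
            result: object = {"error": f"unknown function {call['name']}"}
        else:
            result = tool(**call["arguments"])
        messages.append({"role": "tool", "name": call["name"], "content": json.dumps(result)})
    raise RuntimeError("model did not produce a final answer")


def fake_model(messages: list[dict]) -> dict:
    # Stand-in for an LLM: requests data first, then answers from the tool result.
    if messages[-1]["role"] == "tool":
        tickets = json.loads(messages[-1]["content"])
        return {"content": f"Ada has {len(tickets)} open tickets."}
    return {"call": {"name": "get_open_tickets", "arguments": {"assignee": "ada"}}}


print(run_agent(fake_model, "How many tickets does Ada have?"))
```

Each loop iteration is one model invocation, which is where the extra round-trips and cost come from.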

LLMs can already translate prompts to SQL, which is then run on a database to retrieve the requested data (a process that can be automated). Fine-tuning foundation models helps to harden models for specific use cases, ensuring the model generates more deterministic text styles or output formats.

Lastly, RAG works well on unstructured data like messages, documents, web pages, knowledgebase articles, and other text content of any size. Using an indexing pipeline, unstructured data are retrieved from a source, transformed to comply with downstream components, encoded to their vector representation using a neural network, and ingested into a data store supporting similarity search with approximate nearest neighbors (ANN) using cosine similarity or Euclidean distance.

Indexing unstructured data for RAG

To find the most relevant context for a specific query, all existing knowledge must run through an indexing pipeline. In the first step, documents are retrieved, including their textual representation. This might be easy for documentation articles or chat messages but may be harder for files like PDFs, which are notoriously hard to parse (though recent progress in computer vision and tools like Apple’s Vision framework have helped).

Once the text content has been retrieved, it must be split into chunks to prevent overflowing the LLM context window. There are many different approaches to splitting text, and covering them would go beyond the scope of this guide. Each chunk should make sense when included in the prompt on its own, without losing important context; otherwise, retrieval wouldn’t help at all. At retrieval time, documents can be loaded fully or as chunks and then optionally post-processed to fit the context window by summarizing, refining, or ranking using the LLM itself, among other strategies.
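As one simple illustration of splitting, the sketch below cuts text into fixed-size chunks with an overlap, so that content near a boundary appears in both neighboring chunks. This is a plain sliding window, not the recursive character splitter langsync uses; the default values only mirror the chunk size and overlap mentioned for langsync later in this post.

```python
def split_text(text: str, chunk_size: int = 4000, overlap: int = 200) -> list[str]:
    """Split text into chunks of at most chunk_size characters that overlap by `overlap`."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap  # how far the window advances each time
    chunks: list[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


print(split_text("abcdefghij", chunk_size=4, overlap=1))
# ['abcd', 'defg', 'ghij']
```

A character window ignores sentence and paragraph boundaries, which is the main reason more careful splitters try separators like paragraphs and lines first.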

After splitting the original document, embeddings must be generated for each chunk. Together with the original chunk text and document metadata, the embeddings are then inserted into a vector store. The vector store maintains an index of the vector space to quickly perform semantic search operations on embeddings generated from a query against the embeddings generated from the document chunks.
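The storage and lookup step can be sketched with a tiny in-memory store. This is a brute-force illustration under stated assumptions: the class and method names are invented, the two-dimensional embeddings are hand-written stand-ins for model output, and a real vector store would use an approximate nearest neighbor index instead of scanning every entry.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Entry:
    text: str
    embedding: list[float]
    metadata: dict = field(default_factory=dict)


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Keeps chunk text, metadata, and embeddings; search is a full scan."""

    def __init__(self) -> None:
        self.entries: list[Entry] = []

    def add(self, text: str, embedding: list[float], metadata: Optional[dict] = None) -> None:
        self.entries.append(Entry(text, embedding, metadata or {}))

    def search(self, query_embedding: list[float], k: int = 3) -> list[Entry]:
        ranked = sorted(
            self.entries,
            key=lambda e: cosine_similarity(query_embedding, e.embedding),
            reverse=True,  # highest similarity first
        )
        return ranked[:k]


store = InMemoryVectorStore()
store.add("Cats sleep most of the day.", [1.0, 0.0], {"source": "pets.md"})
store.add("Dogs need daily walks.", [0.0, 1.0], {"source": "pets.md"})
best = store.search([0.9, 0.1], k=1)[0]
print(best.text, best.metadata)
```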

Getting data into LLMs isn’t production-ready

As you can see, going from retrieving text content from dozens of data sources to querying your data involves many steps, each of which justifies its own field of research and optimization. Current solutions are functional prototypes at best, requiring manual data exports, copy-pasting tokens around, and hoping the data provider doesn’t change their API.

While it’s easy for anyone to build a simple retrieval application using popular libraries and integrations, taking it to production requires a lot of work including retrying when upstream providers fail, gracefully responding to rate limits, scaling up or down to handle changing demand, monitoring performance, and more.

We expected at least a handful of solutions for real-time managed embeddings of everyday software like Notion, GitHub, Linear, and others but found that most work is currently happening at the retrieval stage, with far less attention spent on the indexing pipelines.

There are some ETL-for-LLM tools, but they either come from companies that are not focused on LLMs or ignore real-time syncing altogether. In the first case, you depend on a vendor whose revenue is completely detached from your use case; in the second, you won’t get fresh data when it’s available. Other projects offer chatting with your data, which will be properly integrated into ChatGPT Enterprise any week now. Embeddings as a service is clearly not quite a category yet, and products have yet to announce first-party vector search integrations, though that would be an interesting direction.

Retool recently announced their new AI product, which offers a managed no-code AI application builder with managed vector stores, but seemingly did not include integrations with the actual third-party data sources for the first iteration.

We’ve searched long and hard, but couldn’t find a robust solution to connect our tools and pipe data straight into our vector database of choice in real time, so we set out to build it.

Why langsync?

langsync offers a much faster time-to-ROI for engineers building internal tools and LLM apps using their team’s data. Making relevant context accessible is just one authorization away, and content will flow into your vector store within minutes after signing up.

While we could have made langsync incredibly configurable, we decided to strip away all the friction inherent in indexing context and left the two most important options up to you: data sources to connect and data stores to send your content to. That’s it. Whenever a supported data source detects a content change, your embeddings are updated less than 10 seconds later. With langsync, you can be confident that your LLM apps are never running on stale data again.

And the best part? You can self-host and extend langsync to work just how you need it to. We’re fully open source, and we’d love to get your feedback on the next data sources to build, which embeddings to support, and most importantly, how you’re using context in your LLM applications!

Product development

We initially planned langsync to be the WorkOS for LLMs, making it easy for products to use their customers’ data for building powerful LLM experiences, but we focused on the integration story first and may follow up on the platform feature if there is demand. What we ended up with is a simple end-to-end real-time indexing pipeline from data sources to data sinks, designed for LLM applications.

We built the product around the pipeline concept, offering a configurable data stream with built-in document transformations and embeddings before ingesting the vectors into vector stores. Data sources are mainly integrations with third-party tools like Notion, GitHub, Linear, and Slack but could be expanded to documents and files uploaded manually or synced from other locations. For each data source, text splitting options could be configured, but we chose to stick with a proven default recursive character text splitter with 4000 characters per chunk and an overlap of 200 characters.

Embeddings are currently generated with OpenAI Embeddings and may become configurable in a future release. Current data sinks include popular vector stores like Pinecone, Weaviate, Milvus, and Qdrant.

Engineering

The biggest challenge in building langsync was designing a reliable infrastructure around pipeline runs, ensuring fresh data and graceful retries of failing runs. Implementing the actual embedding and vector store ingestion logic took no more than three hours, while the infrastructure foundation took multiple days of the two-week cycle.

Finding the right abstractions

In the beginning, we roughly knew where we wanted to go but we hadn’t solved all the steps to get there, especially around how we wanted integrations to work, and how much configuration we wanted to expose. We quickly settled on the concepts described above with configurable pipeline inputs (sources) and outputs (sinks), while hiding the remaining complexity for most users.

All it takes to build another data source integration is to set up the OAuth integration flow, configure a document retriever to list and retrieve all existing documents shared with the OAuth application and write a content retriever for document text and key-value metadata. For integrations supporting webhooks, a webhook receiver route has to be configured. All of this takes about two hours max, as the heavy lifting is already handled in the background.
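To make the shape of such an integration concrete, here is a hypothetical sketch of the two retriever pieces as a Python interface. The names (`DataSource`, `list_documents`, `retrieve_content`, and so on) are assumptions made for this example and are not langsync's actual abstractions; the OAuth flow and webhook route are left out.

```python
from dataclasses import dataclass
from typing import Iterable, Protocol


@dataclass
class DocumentRef:
    id: str
    title: str


@dataclass
class DocumentContent:
    text: str
    metadata: dict[str, str]  # key-value metadata stored next to the embeddings


class DataSource(Protocol):
    """Hypothetical contract: a document retriever plus a content retriever."""

    def list_documents(self, access_token: str) -> Iterable[DocumentRef]: ...

    def retrieve_content(self, access_token: str, ref: DocumentRef) -> DocumentContent: ...


class InMemorySource:
    """Toy source to exercise the interface; a real one would call the provider's API."""

    def __init__(self, pages: dict[str, str]) -> None:
        self.pages = pages

    def list_documents(self, access_token: str) -> Iterable[DocumentRef]:
        return [DocumentRef(id=key, title=key.title()) for key in self.pages]

    def retrieve_content(self, access_token: str, ref: DocumentRef) -> DocumentContent:
        return DocumentContent(text=self.pages[ref.id], metadata={"title": ref.title})


def sync_all(source: DataSource, access_token: str) -> list[DocumentContent]:
    # Initial sync: list everything shared with the application, then fetch each document.
    return [source.retrieve_content(access_token, ref) for ref in source.list_documents(access_token)]


docs = sync_all(InMemorySource({"roadmap": "Q3 plans"}), access_token="example-token")
print(docs[0].metadata, docs[0].text)
```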

Development flow and tech stack

As with PromptCanvas, our last product, we use Next.js for the application frontend and backend, with a Vercel-managed Neon Postgres instance for persistence. We might switch to upstream Neon eventually, as we used a big chunk of our quotas just to build langsync.

We built the indexing worker, our main service, with Go as a reliable foundation for indexing thousands of documents. This immediately gave us great primitives around concurrency (goroutines), context cancellation, timeouts, and more.

After retrieving the document content in the worker, we need to transform and ingest documents into the final data sinks, so we built a small Python service using LangChain components for text splitting, embedding, moderation, and finally vector store insertion.

AWS SQS backs the queues used for decoupling the worker from the backend, supporting long-running jobs with visibility timeouts. Due to the idempotent nature of the indexing process, we didn’t need stronger guarantees but could have used FIFO queues for exactly-once processing. We also considered Temporal in the selection process but didn’t have the time budget to set up a self-hosted version.

Building the application with hot reload supported by Next.js and the Flask debug server saved lots of time. We tunneled webhook messages using ngrok and used the new AWS SQS Redrive functionality to retry failed messages that were moved to the dead-letter queue (DLQ). We tested the system end-to-end and each component in isolation through the endpoints and queue interfaces.

Ensuring production readiness

Building a reliable experience requires thinking about certain scenarios upfront, including scalability, resilience against unintentional and intentional harmful behavior, budgeting, and observability.

Scaling the worker

We designed our infrastructure around the decoupled worker service pulling new index jobs out of the queue and processing them while periodically sending back a health check to prevent message redelivery while the original worker is still running. We only receive one message per virtual worker at a time, and each physical worker instance is split up into four virtual worker goroutines. We can easily scale virtual and physical worker instances up or down to handle changes in system load.
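The periodic signal that keeps a message from being redelivered can be sketched generically. This is an illustration in Python rather than the Go worker itself, and `run_with_heartbeat` and its `extend` callback are names chosen for the example. With SQS and boto3, `extend` could wrap a `change_message_visibility` call, though the post does not say how langsync implements it.

```python
import threading
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_heartbeat(job: Callable[[], T], extend: Callable[[], None], interval: float) -> T:
    """Run job() while calling extend() every `interval` seconds until the job finishes."""
    done = threading.Event()

    def beat() -> None:
        # Event.wait returns False on timeout and True once the job has finished.
        while not done.wait(interval):
            extend()

    heartbeat = threading.Thread(target=beat, daemon=True)
    heartbeat.start()
    try:
        return job()
    finally:
        done.set()  # stop the heartbeat whether the job succeeded or raised
        heartbeat.join()


def index_documents() -> str:
    time.sleep(0.2)  # stands in for a long-running pipeline run
    return "indexed"


beats: list[float] = []
result = run_with_heartbeat(index_documents, lambda: beats.append(time.monotonic()), interval=0.05)
print(result, "after", len(beats), "heartbeats")
```

If the worker crashes, the heartbeats stop, the visibility timeout expires, and the queue hands the message to another worker.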

Gracefully handling failure

As most of the work happens during the pipeline runs, we focused on ensuring that failing operations would be retried gracefully, if possible. Permanent errors are reported back to the user.

Being nice to third-party services

With many requests sent to third-party data sources, we designed our system to respect rate limits and other service quotas. We didn’t want to degrade the performance of external systems, so we cap the throughput to external services in each worker, handle rate-limit responses gracefully, and retry with exponential backoff. This way, we won’t run into a situation where our infrastructure effectively runs a DoS attack against an external provider just to fetch the latest data.
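Retrying with exponential backoff can be sketched as follows. The `RateLimited` exception, the default delays, and the use of random jitter are assumptions for this example; the post does not describe the exact policy langsync applies.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical error raised when a provider answers with HTTP 429."""


def retry_with_backoff(
    call: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The delay cap doubles per attempt; the random factor spreads out retries.
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, cap))
    raise ValueError("max_attempts must be at least 1")


attempts = {"count": 0}


def flaky_fetch() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimited()
    return "page content"


print(retry_with_backoff(flaky_fetch, base_delay=0.01))
```

Passing `sleep` as a parameter keeps the function easy to test without real waiting.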

Full observability

To make sure no important error slips through the cracks, we added monitoring to all components in the stack. We track transactions (requests to the API, pipeline runs in the worker, ingestion operations in the Python helper service, etc.) as well as one-off events (user signed up, user suspended, etc.).

Service quotas

As we offer managed embeddings using the OpenAI Embeddings API, we had to limit the maximum spend per user to a reasonable amount. We also wanted users to bring their big documents without annoying limits to enjoy the full power of langsync, so we settled on a trade-off with two quota dimensions: all-time document syncs and all-time token count.

Each time a document is synced from a source and ingested into the vector store, we count this as an indexed document. For every successfully indexed document, we also count the embedded tokens. The token count only increases for changed documents, so there’s no additional cost for indexing documents that have not changed since the last sync. This way, we can control our spend while giving users a generous amount of usage before hitting the limits.
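One way to implement this kind of accounting is to compare a content hash before embedding. The sketch below makes several assumptions that are not taken from langsync's code: all names are invented, unchanged documents are skipped entirely and count toward neither quota, and tokens are approximated by splitting on whitespace instead of using a real tokenizer.

```python
import hashlib
from dataclasses import dataclass, field


class QuotaExceeded(Exception):
    pass


@dataclass
class Usage:
    documents: int = 0  # all-time document syncs
    tokens: int = 0     # all-time embedded tokens
    hashes: dict[str, str] = field(default_factory=dict)  # document id -> content hash


def count_tokens(text: str) -> int:
    # Placeholder: a real tokenizer would give different counts.
    return len(text.split())


def record_sync(usage: Usage, doc_id: str, text: str, max_documents: int, max_tokens: int) -> bool:
    """Return True if the document should be embedded, False if it is unchanged."""
    digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
    if usage.hashes.get(doc_id) == digest:
        return False  # unchanged since the last sync: nothing to embed, nothing to count
    tokens = count_tokens(text)
    if usage.documents + 1 > max_documents or usage.tokens + tokens > max_tokens:
        raise QuotaExceeded(f"quota reached while syncing {doc_id}")
    usage.documents += 1
    usage.tokens += tokens
    usage.hashes[doc_id] = digest
    return True


usage = Usage()
record_sync(usage, "doc-1", "hello world", max_documents=10, max_tokens=100)
record_sync(usage, "doc-1", "hello world", max_documents=10, max_tokens=100)  # skipped
print(usage.documents, usage.tokens)
```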

Protection against malicious content

To keep content that violates OpenAI’s usage policies out of the pipeline, each chunk of text is sent through the Moderation API before embedding to verify it does not contain harmful content. Whenever harmful content is detected, the account is suspended permanently.

Crafting a solid UX

We knew from the start that the best experience required the system to just work. Users didn’t want to figure out failing pipelines or deal with other friction in any way, so we spent most of our time pushing indexing work into the background, surfacing updates to the user in real time.

The final dashboard is quite minimal, offering the essentials like connecting, reconnecting, and disconnecting integrations, as well as configuring data sources and viewing the pipeline run history. In most cases, the system will gracefully heal itself when errors are transient. In the rare case that we cannot fix it, we’ll report the failure back to the user.

Using OAuth for the connection process meant langsync is far easier to set up and get started with than any existing alternative, some of which still require users to manually export all their Notion data to Markdown.

What we learned

While unstructured data represents a big chunk of knowledge, context is usually more personalized than documents. For most applications, there is a split between retrieving data from the perspective of a user and from that of their workspace. For an issue tracker, you might want to pull in tickets assigned to the current user. For enterprise search, you might want to request all issues. This distinction can be made in metadata, but it’s not the most efficient solution.

We believe that unstructured data is important for a very specific class of use cases like semantic search and Q&A applications, but giving LLMs access to third-party APIs or interfaces like SQL will create better results in personalized scenarios and fetch only the required data (which removes the need for chunking strategies for most applications). Unfortunately, not all current models support these agent capabilities, and even models that do can hallucinate API calls that don’t exist. Continued investment in steerability will ultimately enable more powerful integrations between LLMs as reasoning engines and external data sources.

Finally, it is very hard to generalize multiple data sources into one product. Ingesting data in this one-size-fits-all approach works for some use cases, but giving developers more control over how data is integrated is critical in the long run.

With all this said, we believe that future LLM applications will make use of multiple data sources, be it unstructured data with semantic search, API/SQL access for LLM agents, feature stores, or context inserted manually into prompts. Models will become more steerable and will follow instructions more closely, and fine-tuning is a cost-effective way to this end.

LLMs will become commonplace and autonomous

While LLMs are still mostly used for one-off tasks, with more context and steerability, they have the potential to power more parts of SMB and enterprise businesses, ranging from software development and customer support to HR and other functions that can be automated. Larger LLMs can be used as reasoning engines, and with more work to reduce hallucinations, they can work on trusted information infused from external sources.

This makes the data you collect and make available to your LLM applications as important as the model you choose. Thin wrappers on top of public providers won’t create any value if applications require all context that exists within companies, and outdated context will severely hurt the usefulness of LLM applications.

Putting all the pieces together, if we want to run LLMs in autonomous scenarios and on bigger tasks, we need to provide fresh, personalized, and relevant context as text from every available source of knowledge. With langsync, we have solved one piece of the puzzle, and we’ll work on the remaining parts of the lifecycle in upcoming issues.

Try it out!

Starting today, you can sign up to langsync and start syncing your team’s data into your vector store of choice for free! If you’d like to run langsync on your own infrastructure, we have prepared a guide in our repository. We would love to get your feedback on additional data sources and data sinks to support, so feel free to contribute! If you have any questions, join our Discord server.

Thank you for reading the second issue of Gradients & Grit. If you enjoyed this issue, please consider subscribing to the newsletter to be the first to know about new issues and other updates. If you have any open questions, suggestions, or feedback in general, please join the Discord community!

In the coming weeks, we will dive deeper into integrating LLMs into your applications, best practices for making your AI-enabled software production-ready, and more. Stay tuned!

langsync would not have been possible without the help of the following people: Malte Pietsch and Dino Omanovic.